In a previous post we discussed the difference between ‘Project FPS’ and ‘Sensor FPS’.

If you have a good understanding of these two definitions, it’s time to dig deeper into ‘Project FPS’, often referred to as ‘Frame Rate’.

Definition

The term ‘frame rate’ refers to the number of individual frames or images that are displayed (played back) per second. Since 1927, the standard frame rate for cinema film has been 24 fps (frames per second). For TV the standard is 30 fps for NTSC (National Television System Committee – the system used in North America, Japan and many other areas around the world) and 25 fps for PAL (Phase Alternating Line – the system used in Europe, parts of Africa and SE Asia). Strictly speaking, colour NTSC runs at roughly 29.97 fps, but 30 fps is the usual shorthand.
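As a rough illustration of what these rates mean in practice, the sketch below converts each nominal frame rate into the time a single frame stays on screen. The function name `frame_duration_ms` is just an illustrative choice, not something from a particular tool.

```python
# Nominal frame rates quoted above (frames per second).
STANDARD_RATES = {"Film": 24, "PAL": 25, "NTSC": 30}


def frame_duration_ms(fps):
    """Return how long one frame is shown, in milliseconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


for name, fps in STANDARD_RATES.items():
    print(f"{name}: {fps} fps -> {frame_duration_ms(fps):.2f} ms per frame")
```

At 24 fps each frame is on screen for roughly 41.7 ms, at 25 fps for exactly 40 ms, and at 30 fps for about 33.3 ms.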

Science

Our brains can build a continuous moving picture from as few as 16 fps, and stop perceiving notable differences at around 60 fps.

But to understand this a little better without becoming too scientific, we have to bring two more definitions into play, namely:

- FLICKER (light intensity pulse)

- ANALYZE.

Human sight and perception is a strange and complicated thing, and it doesn’t quite work the way it feels.

Detecting motion (analyze) is not the same as detecting light (flicker). Different parts of the eye also perform differently: the centre of your vision is good at different things than the periphery. On top of that, there are natural, physical limits to what we can perceive. It takes time for the light that passes through your cornea (the front part of your eye) to become information on which your brain can act, and our brains can only process that information at a certain speed.

Numbers

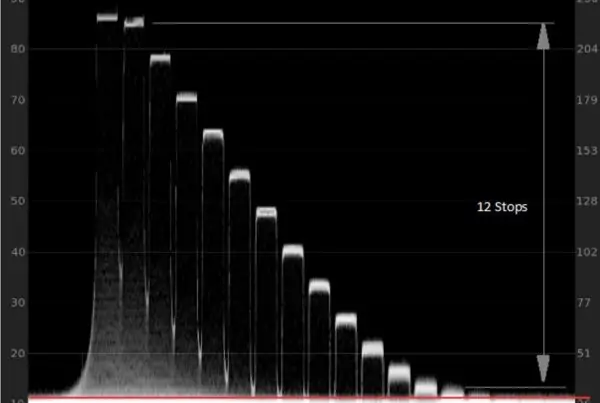

The first thing to think about is flicker frequency. Most people perceive a flickering light source as steady illumination once it flickers at around 50 to 60 times a second.

In contrast to flicker, our eyes do not seem capable of perceiving more than about 12 passing frames per second as distinct individual images (analyze).

To overcome the ‘Flicker’ vs ‘Analyze’ issue without maximizing the frame rate, we can add motion blur. Motion blur is a good way to merge neighboring frames and reduce flicker, jitter and similar artifacts caused by fast-moving objects. More about this in our upcoming blog post ‘Shutter Speed vs Shutter Time’.

Also, in analogue projection we can ‘flash’ each frame multiple times to make the interval between two frames less noticeable.

24 FPS

24 fps is not the minimum required for persistence of vision – but it was a rate that gave a quality effect at a reasonable cost.

Higher frame rates would require more frames, meaning filmmakers would have to use more film. The same goes for hard disk space in digital cinematography.
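To get a feel for how cost scales with frame rate, here is a minimal sketch. It assumes standard 4-perforation 35 mm film, which holds 16 frames per foot, and a purely hypothetical digital frame size of 10 MB per frame. Neither figure comes from this post, so substitute your own numbers.

```python
FRAMES_PER_FOOT_35MM = 16   # 4-perf 35 mm film (assumption, not from the post)
ASSUMED_MB_PER_FRAME = 10   # hypothetical digital frame size, for illustration only


def film_feet_per_minute(fps):
    """Feet of 35 mm film consumed per minute of footage."""
    return fps * 60 / FRAMES_PER_FOOT_35MM


def storage_gb_per_minute(fps, mb_per_frame=ASSUMED_MB_PER_FRAME):
    """Gigabytes of storage needed per minute (using 1 GB = 1000 MB)."""
    return fps * 60 * mb_per_frame / 1000


for fps in (16, 24, 48):
    print(f"{fps} fps: {film_feet_per_minute(fps):.0f} ft/min of film, "
          f"{storage_gb_per_minute(fps):.1f} GB/min of storage")
```

Under these assumptions 24 fps uses 90 feet of film per minute, and doubling the frame rate doubles both the film and the storage figures.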

History

Although greater frame rates would produce better persistence of vision, 16 fps became the unofficial standard for silent films. It was enough to create the illusion of motion, and studio execs were not losing cash with every turn of the hand crank.

24 fps came to life at the start of the ‘sync sound era’ around 1927.

24 fps was chosen because the introduction of sound called for a new, standardized frame rate. It was also chosen because of math: 24 is an easily divided number, so editors could work out specific time cuts based on the number of frames. Twelve frames would be half a second, six frames would be a quarter of a second, and so forth.
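The divisibility argument can be checked with a few lines of Python. This is only a sketch; the helper names `divisors` and `frames_for` are hypothetical and chosen for illustration.

```python
from fractions import Fraction


def divisors(n):
    """Return every whole number that divides n evenly."""
    return [d for d in range(1, n + 1) if n % d == 0]


def frames_for(seconds, fps=24):
    """Number of frames in a cut of the given length, as an exact fraction."""
    return Fraction(seconds) * fps


print(divisors(24))                  # [1, 2, 3, 4, 6, 8, 12, 24]
print(frames_for(Fraction(1, 2)))    # 12
print(frames_for(Fraction(1, 4)))    # 6
print(frames_for(Fraction(1, 3)))    # 8
```

Halves, thirds, quarters, sixths and eighths of a second all land on a whole number of frames at 24 fps, which is what makes the frame counting convenient.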

Note: if every frame was ‘flashed twice’ during analogue projection, you had a flicker rate of 48 flashes per second, which according to Thomas Edison was the right speed to eliminate any perceived flicker.
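The arithmetic behind this note is simple enough to sketch. The function name `flicker_rate_hz` is illustrative, and the one-flash and three-flash cases are included only for comparison.

```python
def flicker_rate_hz(fps, flashes_per_frame):
    """Light pulses per second when each frame is flashed several times."""
    return fps * flashes_per_frame


for flashes in (1, 2, 3):
    rate = flicker_rate_hz(24, flashes)
    print(f"24 fps, {flashes} flash(es) per frame -> {rate} pulses per second")
```

Flashing each frame twice raises the flicker rate to 48 pulses per second without adding a single frame of film.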

Esthetic

The esthetic of 24 fps became culturally ingrained, and is often referred to when we speak about the so-called ‘cinematic look’. To this day most films are made to be screened at 24 fps.

Higher Frame Rates?

'Higher frame rates than 24 will look more realistic.' Yet most cinema-goers have been conditioned to the look and feel of a 24 fps film and tend to reject any frame rate that’s higher than 24 (look at the reception of The Hobbit in 2012, which was shot and screened at 48 fps).

The psychological concept behind this says that:

If people see something artificial that starts to approach looking real, they begin to inherently reject it.